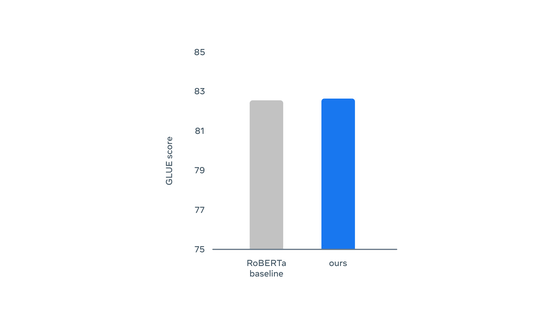

Meta, which operates Facebook, announced that it has developed 'data2vec', a self-learning AI that can be applied across multiple fields.

data2vec: The first high-performance self-supervised algorithm that works for speech, vision, and text

Introducing the First Self-Supervised Algorithm for Speech, Vision and Text | Meta

data2vec: A General Framework for Self-supervised Learning in Speech, Vision and Language

According to Meta, most AI developed as of 2022 relies on supervised learning, in which the model learns from labels of correct and incorrect answers that humans attach in advance. However, it is virtually impossible for humans to prepare labels in advance for everything an AI might need to learn. Current research therefore focuses on developing AI that can learn by itself, without humans teaching it which answers are correct or incorrect.

However, according to Meta, even though the goal of developing AI that learns by itself is shared, the approach has continued to differ greatly between the fields of language, image, and voice. Against this background, data2vec, newly developed by Meta, is a self-learning AI that works the same way in different fields: one neural network approach for images, text, and speech. By focusing on the layers of the network, that is, by predicting the network's own internal representations rather than modality-specific targets such as words or pixels, a single algorithm can handle different types of input data.

The specific training method is as follows. First, the AI is split into two roles, a 'teacher' and a 'student'. Part of the input is hidden, for example by blacking out the dog's face in an image of a dog; the student receives this masked version, while the teacher works from the complete input. Next, the student is asked to predict and fill in the hidden part, and repeating this deepens its self-learning.

According to Meta, data2vec achieves better results than existing algorithms in image recognition. In speech recognition, it also outperformed wav2vec 2.0 and HuBERT. In natural language understanding, it performed about the same as RoBERTa, an improved version of BERT.

A related paper on audio-visual speech recognition describes its motivation as follows: "Self-supervision has shown great potential for audio-visual speech recognition by vastly reducing the amount of labeled data required to build good systems. However, existing methods are either not entirely end-to-end or do not train joint representations of both modalities. In this paper, we introduce AV-data2vec which addresses these challenges and builds audio-visual representations."

A separate project is centered on the data2vec model (the first multi-modal self-supervised algorithm). Its goal is to compare data2vec to unimodal self-supervised models on Question Answering and Sentiment Classification tasks.
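The teacher and student training method described above can be illustrated with a small sketch. This is a toy under stated assumptions, not Meta's implementation: the function names (`mask_positions`, `apply_mask`, `encode`, `masked_loss`, `ema_update`) are hypothetical, the "network" is a single three-weight filter over a list of numbers, and the loss is plain squared error. The points it tries to show are that the student sees a masked input, the teacher sees the full input, the loss is measured only at the hidden positions, and the teacher's weights slowly follow the student's through an exponential moving average.

```python
import random


def mask_positions(length, mask_ratio, rng):
    """Choose which input positions are hidden from the student."""
    count = max(1, int(length * mask_ratio))
    return sorted(rng.sample(range(length), count))


def apply_mask(values, masked, mask_value=0.0):
    """Replace the chosen positions with a placeholder (the 'blacked out' part)."""
    hidden = set(masked)
    return [mask_value if i in hidden else v for i, v in enumerate(values)]


def encode(weights, values):
    """Toy one-layer network: each output mixes a position with its two neighbours."""
    left, centre, right = weights
    padded = [0.0] + list(values) + [0.0]  # zero padding at both ends
    return [
        left * padded[i - 1] + centre * padded[i] + right * padded[i + 1]
        for i in range(1, len(padded) - 1)
    ]


def masked_loss(student_w, teacher_w, values, masked):
    """Mean squared error between student and teacher outputs at hidden positions."""
    targets = encode(teacher_w, values)                          # teacher: full input
    predictions = encode(student_w, apply_mask(values, masked))  # student: masked input
    return sum((predictions[i] - targets[i]) ** 2 for i in masked) / len(masked)


def ema_update(teacher_w, student_w, tau=0.999):
    """Move each teacher weight a small step toward the matching student weight."""
    return [tau * t + (1.0 - tau) * s for t, s in zip(teacher_w, student_w)]


if __name__ == "__main__":
    rng = random.Random(0)
    signal = [float(i) for i in range(8)]
    teacher = [0.0, 1.0, 0.0]  # assumed starting weights, for illustration only
    student = [0.4, 0.0, 0.4]
    masked = mask_positions(len(signal), 0.25, rng)
    print("masked positions:", masked)
    print("loss:", masked_loss(student, teacher, signal, masked))
    teacher = ema_update(teacher, student)
    print("teacher after EMA step:", teacher)
```

In a real system the student would be updated by gradient descent on this loss and both roles would be deep networks; the sketch leaves the optimizer out to keep the division of roles visible.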

13:00:00 Meta announces self-learning AI 'data2vec' that can adapt to multiple fields such as language, image, voice
